It is known that Archimedes created a game/puzzle with the dissection of a square into 14 pieces. The object of the puzzle is to put the pieces back together to form a square. A more difficult question, unanswered for over 2000 years, is how many distinct ways there are of putting the pieces together to form a square. Bill Cutler used a computer program to show that there are 536 distinct ways to assemble the pieces, not counting rotations and reflections. All 536 solutions are visible in this article from Ed Pegg's web site.

The Archimedes Palimpsest is a parchment codex palimpsest, which originally was a 10th-century Byzantine Greek copy of an otherwise unknown work of Archimedes of Syracuse and other authors. It was overwritten with a Christian religious text by 13th-century monks. The erasure was incomplete, and Archimedes' work is now readable after scientific and scholarly work from 1998 to 2008 using digital processing of images produced by ultraviolet, infrared, visible and raking light, and X-ray.

The Palimpsest is the only known copy of "Stomachion". The origin of the puzzle's name is unclear, and it has been suggested that it is taken from the ancient Greek word for throat or gullet, stomachos (στόμαχος). Ausonius refers to the puzzle as Ostomachion, a Greek compound word formed from the roots of ὀστέον (osteon, bone) and μάχη (machē, fight). The puzzle is also known as the Loculus of Archimedes or Archimedes' Box. Loculus seems to be a word related to the division of a tomb area into small chambers for different bodies and is related to the diminutive of locus for a point or place, thus "a little place". (I don't yet get the connection between the puzzle and stomach. Bob Mrotek pointed out to me in a comment that the Stomachion may be a later condensation of the Greek Ostomachion, Ὀστομάχιον, "a word meaning a fight (μάχη, mákhion) with bones (ὀστέον, ostéon) in reference to the pieces which were often made out of ivory." This now appears on Wikipedia as well.)

The puzzle is sold by Kadon as Archimedes Square:

Amazingly, the next "put-together" puzzle of geometric shapes didn't appear until 1742. The wisdom plates of Sei Shonagon is a seven-piece puzzle. It appeared in Japan 71 years before the more famous Tangram puzzle appeared in China. The second edition of this puzzle, published in 1743, is the oldest known surviving puzzle of this type.

*http://www.indiana.edu

Tangram is the name of a Chinese puzzle of seven pieces that became popular in Europe around the middle of the 19th century. It seems to have been brought back to England by sailors returning from Hong Kong. The origin of the name is not definite. One theory is that it comes from the Cantonese word for chin. A second is that it is related to a mispronunciation of a Chinese term that the sailors used for the ladies of the evening from whom they learned the game. [Concubines on the floating brothels of Canton, Hong Kong, and many other ports belonged to an ethnic group called the Tanka whose ancestors came from the interior of the country to become fishermen and pearl divers. They were considered non-Chinese by the governments of China until 1731. They were unique among Chinese women in refusing to have their feet bound.] A third suggestion is that it is from the archaic Chinese root for the number seven, which still persists in the Tanabata festival on July seventh in Japan, which celebrates the reunion of the weaver (Vega) and the herdsman (Altair). David Singmaster, below, suggests the name was made up by puzzle master Sam Loyd, but I favor Harvard President Thomas Hill (below). Whatever the origin of the name, the use of the seven shapes as a game in China was supposed to date back to the origin of the Chou dynasty over one thousand years before the common era. The Chinese name is Ch'i ch'iao t'u which translates, so I am told, as "ingenious plan of seven".

It appears, however, that the game and the name are both much more modern than believed. From the MathPuzzle.com website, I found that "The Tangram was invented between 1796 and 1802 in China by Yang-cho-chu-shih. He published the book Ch'i ch'iao t'u (Pictures using seven clever pieces). The first European publication of Tangrams was in 1817. The word Tangram itself was coined by Dr. Thomas Hill in 1848 for his book Geometrical Puzzles for the Young. He became the president of Harvard in 1862, and also invented the game Halma."

When Tangrams hit Europe they were an immediate success. A puzzle museum on-line boasts a collection of a dozen books which were all written within a year of 1817 when tangrams were supposedly introduced into Europe:

Here is some history of the game by David Singmaster, one of the world's foremost authorities on recreational mathematics,

TANGRAMS. These are traditionally associated with China of several thousand years ago, but the earliest books are from the early 19C and appear in the west and in China at about the same time.

(Although the image below with tangram problems to create was printed in Japan in 1795, and Utamaro's "Tagasode" is a famous 1804 Japanese blockprint that shows Tagasode and her servant trying to solve Tangrams.) Indeed, the word 'tangram' appears to be a 19C American invention (probably by Sam Loyd). A slightly different form of the game appears in Japan in a booklet by Ganreiken in 1742. Takagi says the author's real name is unknown, but Slocum & Botermans say it was probably Fan Chu Sen. There is an Utamaro woodcut of 1780 showing some form of the game (not yet seen by me). I have seen a 1786 print, Interior of an Edo House, from The Edo Sparrows or Chattering Guide, that may show the game. Needham says there are some early Chinese books, and van der Waals' historical chapter in Elffers' book Tangram cites a number with the following titles.

Ch'i Ch'iao ch'u pien ho-pi. >1820.

Ch'i Ch'iao hsin p'u. 1815 and later.

Ch'i Ch'iao pan. c1820.

Ch'i Ch'iao t'u ho-pi. Introduction by Sang-Hsi Ko. 1813 and later. [Remarks inserted from a description of the book in Tangram by Joost Elffers: Located in the Leiden Library #6891. This book, with an introduction by Sang-Hsi Ko, is, as far as is known, the oldest example of a Chinese game-book.]

I would like to see some of these or photocopies of them. I would also be interested in seeing antique versions of the game itself. The only historical antecedent is the 'Loculus of Archimedes', a 14 piece puzzle known from about -3C to 6C in the Greek world. Could it have travelled to China? I found a plastic version of the Loculus on sale in Xian, made in Liaoning province. I wrote to the manufacturer to get more, but have had no reply.

For the 10th International Puzzle Party, Naoki Takashima sent a reproduction of a 1881 Japanese edition of an 1803 Chinese book on Tangrams which he says is the earliest known Tangram book.

Jean-Claude Martzloff found some drawings of tangram-like puzzles from a 1727 booklet Wakoku Chie-kurabe, reproduced in Akira Hirayama's Tōzai Sūgaku Monogatari (Heibonsha, 1973). Takagi has kindly sent his reprint of this booklet, but I am unsure as to the author, etc.

*Utamaro’s “Tagasode” http://www.indiana.edu

The "Ganriken" mentioned in Dr. Singmaster's post was a pseudonym, and it seems unclear who the actual author was. The book was titled "Sei-Shonagon Chie-no-Ita", which translates to "the ingenious pieces of Sei Shonagon". From Wikipedia, "Sei Shonagon was a lady-in-waiting at the Japanese Imperial Court in the beginning of the 11th Century. She kept a personal diary of sorts in which she wrote down her experiences but mainly her feelings. Such diaries were common at the time and were called pillow books because these books were often kept next to people's pillows in which they would write their experiences and observations. The Pillow Book of Sei Shonagon gives an invaluable insight into the world of the Imperial Court of Kyoto a thousand years ago. Sei Shonagon's observations are witty, wry, poignant, and at times condescending."

Tangrams received another boost in popularity when Charles Dodgson, writing as Lewis Carroll, used them to create illustrations of the characters in the "Alice" books. In the Penguin Books translation of Tangram, Joost Elffers states that English puzzle writer H. E. Dudeney purchased a copy of a play-book called The Fashionable Chinese Puzzle from Dodgson's estate. This book seems to be the most common source of the assertion that Napoleon was an avid Tangram player.

And recently I found this beautiful set of 19th-century Tangram dishes made in China, which are in the Hikimi Town Puzzle Museum in Japan.

Monday, 31 December 2012

Sunday, 30 December 2012

Saturday, 29 December 2012

One D is not enough, two is too many

Alexander R. Todd, who became Lord Todd of Trumpington (an ancient village in the city of Cambridge which is mentioned in the Canterbury Tales), was considered one of the most authoritarian department chairs in Cambridge University. He held the chair in Organic Chemistry as the result of his incredible breakthroughs in the synthesis of the nucleotides that form DNA, work which led to his Nobel Prize in 1957.

He was admired and respected; perhaps even feared, but in such a community no one was above a little fun-poking. The British describe it as, "taking the Mickey out of someone."

For Todd it came in the traditional British form of poetic jingles and clerihews. One common one went thus:

Do you not think it odd

That a commonplace fellow like Todd

Should spell, if you please,

His name with two d's,

When one is sufficient for God.

And a similar theme included another Nobel Laureate Nevill Mott:

Alexander Todd

Thinks he's God

But Nevill Mott

Knows he's not.

Lord Alexander Todd (1907 - 1997) was awarded the 1957 Nobel Prize in Chemistry for his work on the chemical structure of nucleotides and nucleic acids, particularly the phosphate derivatives. Todd is best known for working out the linkage between nucleotides in RNA and DNA, but he is also responsible for proving that the sugar moieties are particular ring structures called β-D-ribofuranosides and β-D-deoxyribofuranosides. His lab in Cambridge (UK) synthesized all of the common nucleotides.

Friday, 28 December 2012

Thursday, 27 December 2012

Wednesday, 26 December 2012

Tuesday, 25 December 2012

Monday, 24 December 2012

A Puzzle for Christmas Time

In my recent post about the sum of squares of the natural integers, I mentioned that one of the problems of recreational mathematics related to the series was the problem of counting the number of squares that were in an nxn square array such as the one above.

Joshua Zucker sent an interesting (read more challenging) version of the problem of counting squares by suggesting that instead of an array of squares, we use an array of dots, like the one below.

This kind of array can be more challenging because in addition to squares like the ones below

You can get some turned like this:

And when the array gets a little larger, they start to have some like this:

Since Joshua has had time to think about this and come up with one of his (always) clever answers that makes it look easy (easier?), I will leave this posed as a problem, and invite him to send his approach, which I will post at a later date so as not to spoil the fun for the rest of us. I already see that this will go off into some special partitions of integers, which means I have to really put my thinking cap on.

In the meantime, enjoy the puzzle and send your responses for solutions for given sizes, or a general method. I'm off to find paper and pencil for a snowy afternoon of mathematical doodling.

A puzzle for you all, from Santa Joshua. Merry Christmas.

Joshua also asked another question in his comment: is there a geometric visualization for WHY the 2N+1 shows up in the formula? I would love to hear and share your answers about that one as well.

Sunday, 23 December 2012

Saturday, 22 December 2012

Friday, 21 December 2012

My Formula for Series of Squares of Arithmetic Sequence

A new post of this blog is available

https://pballew.blogspot.com/2019/11/over-last-month-most-popular-blog-post.html

Recently while reading Fibonacci's Liber Abaci about the sums of squares I mis-read that he had included a formula for finding the sum of the squares of integers in arithmetic sequence. He didn't; well he did, but only for select sequences that obeyed his special rule.

Fibonacci's rule for the sum of the squares of the first n integers was to multiply the last term by the next term that would be in the sequence (if it were continued), then multiply that product by their sum and divide by 6. This produces the familiar $1^2+2^2+3^2+\dots+n^2 = n(n+1)(2n+1)/6$ which appears in all the textbooks.

Fibonacci went on to show that if you wanted to sum the squares of the odd integers, then the same rule would apply if we divide by an additional 2. That is, $1^2+3^2+5^2+\dots+n^2 = n(n+2)(2n+2)/12$, where n here is the last odd number in the sum.
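Both versions of the rule are easy to check by machine. The following is only a small sketch: the function names `fibonacci_rule` and `sum_of_squares` are made up for illustration, and the multiples-of-3 case is an extra test that is not one of Fibonacci's own examples.

```python
from fractions import Fraction

def fibonacci_rule(last, step=1):
    """Last term times the next term times their sum, over 6 and over the step."""
    nxt = last + step  # the term that would come next if the sequence continued
    return Fraction(last * nxt * (last + nxt), 6 * step)

def sum_of_squares(first, last, step=1):
    """Direct term-by-term sum, used only as a check on the rule."""
    return sum(k * k for k in range(first, last + 1, step))

print(fibonacci_rule(10), sum_of_squares(1, 10))        # 385 385
print(fibonacci_rule(9, 2), sum_of_squares(1, 9, 2))    # 165 165
print(fibonacci_rule(12, 3), sum_of_squares(3, 12, 3))  # 270 270
```

Exact fractions are used so that a result that is not a whole number would show up as such rather than being rounded away.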

He goes on to state that the same rule would work if you had multiples of 3 or 4 and divided by both 6 and the increment between terms. It was this part that I glibly misread to think that it applied to all arithmetic sequences. When I tried it out on a sequence, $1^2+4^2+7^2$, I found that 7(10)(17) was not going to be divisible by 6×3. And when I tried other examples, they almost always failed, but on following up with the division it turned out that, although they were not exact, they were always very close.

The true value of the series of squares above turns out to be 66, and the result by my over extension of Fibonacci's rule leads to 66.11111.....

As long as the first term in the sequence was one, it seemed that the floor function of the result was the correct answer. For example, $1^2+6^2+11^2+16^2 = 414$

Using 16(21)(37)/(6*5) = 414.4

If you started with a first term other than one, the results were undervalued. $2^2+5^2+8^2 = 93$, while the generalized rule would give 8(11)(19)/(6×3) = 92.8888...

$3^2+7^2+11^2+15^2 = 404$, and the rule gives (15)(19)(34)/(6×4) = 403.75.
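The near misses can be tabulated exactly. This is a sketch with invented names (`overextended_rule`, `true_sum`, `excess`). The closing assertion records an observation that is not in the original post: because the rule's value grows by exactly $L^2$ each time the last term L advances by d, the excess depends only on the first term and the step, and equals the rule applied to the term just before the first one.

```python
from fractions import Fraction

def overextended_rule(last, d):
    """Fibonacci's rule stretched to any step d: L(L+d)(2L+d) / (6d)."""
    return Fraction(last * (last + d) * (2 * last + d), 6 * d)

def true_sum(first, last, d):
    """Direct sum of the squares of first, first+d, ..., last."""
    return sum(k * k for k in range(first, last + 1, d))

def excess(first, last, d):
    """How far the stretched rule overshoots (negative means undershoots)."""
    return overextended_rule(last, d) - true_sum(first, last, d)

for first, last, d in [(1, 7, 3), (1, 16, 5), (2, 8, 3), (3, 15, 4)]:
    print(first, last, d, true_sum(first, last, d), excess(first, last, d))
    # The excess is the rule evaluated at the (hypothetical) term before the first.
    assert excess(first, last, d) == overextended_rule(first - d, d)
```

The four printed excesses are 1/9, 2/5, -1/9 and -1/4, matching the 66.111..., 414.4, 92.888... and 403.75 found by hand.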

I thought I remembered that the early Hindu or Arabic or somebody scholars had a formula for that, but I wasn't sure if it was a simple rule or one developed too late for inclusion in the Liber Abaci, so I flipped open a pad and began to scribble out a little high school level algebra, and rather quickly (for an older guy) came up with what I thought was a somewhat pretty little method. I would later search out an answer not TOOOO different, but thought my notation was much superior for remembering, so here it is; Ballew's formula for the sum of the squares of integers in an arithmetic sequence.

Let F, L, d, and n represent the first term, last term, common difference, and number of terms of an arithmetic sequence; then the sum of the squares, S, is given by $S = FLn + d^2(n-1)n(2n-1)/6$. Note the part after the addition sign is simply the sum of the squares of the first n-1 integers times the square of the common difference.

For $1^2+6^2+11^2+16^2 = 414$ we have n=4, d=5, F=1 and L=16. This gives $4(1)(16) + 5^2(3)(4)(7)/6 = 64 + 350 = 414$.

If we started somewhere higher, $25^2+31^2+37^2+\dots+49^2 = 7205$. Using n=5, d=6, F=25 and L=49 we get $(5)(25)(49) + 6^2(4)(5)(9)/6$ = Yep, you guessed it, 7205.
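For anyone who would rather let a machine do the arithmetic, here is a minimal sketch of the formula $FLn + d^2(n-1)n(2n-1)/6$. The function names are invented for this example, and the brute-force sum is there only as a check.

```python
def sum_squares_arithmetic(first, d, n):
    """F*L*n + d^2 * (n-1)n(2n-1)/6 for the sequence first, first+d, ... (n terms)."""
    last = first + (n - 1) * d
    # (n-1)n(2n-1)/6 is the sum of the first n-1 squares, so the division is exact.
    return first * last * n + d * d * ((n - 1) * n * (2 * n - 1) // 6)

def brute_force(first, d, n):
    """Term-by-term sum of squares, used only to confirm the formula."""
    return sum((first + k * d) ** 2 for k in range(n))

print(sum_squares_arithmetic(1, 5, 4))    # 414
print(sum_squares_arithmetic(25, 6, 5))   # 7205
print(sum_squares_arithmetic(2, 5, 10))   # 8065
```

The third call uses n=10, d=5 and F=2, which gives 2, 7, ..., 47.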

Checking on the history of squaring arithmetic sequences, I found that as early as 850, Mahavira's Ganita Sara Sangraha contained a much more complicated formula :

The sum is given as 8065. Using my n=10, d= 5, F= 2 and L= 47 gives (10)(2)(47) + 25(9)(10)(19)/6 which is also 8065.

I did find another version somewhat later that was more similar to mine, but still not as nice (don't you agree?) in the work of Narayana Pandita from the 14th Century (perhaps you remember his problem of Cows and calves, something to write on later).

His result looks like this:

Note he also has the square of the difference on the right multiplied by the sum of n-1 squares.

Before I close on the topic, while scrolling through these old scholars' work I did find a really simple formula for the sum of the cubes of an arithmetic sequence.

The formula uses S as the sum of the simple arithmetic sequence. Today we might write S = n(F+L)/2. The sum of the cubes of that sequence is given by $dS^2 + SF(F-d)$.

For the case of S = 2+5+8+11 = 26, and $2^3+5^3+8^3+11^3 = 1976$, the method says to use $26^2 \cdot 3 + 26(2)(-1) = 1976$.

Note that for the natural sequence with F = 1 and d = 1, the last term disappears to give $S^2$, which is the textbook formula.
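The cube rule can be checked the same way. The sketch below takes the formula in the form $dS^2 + SF(F-d)$, which is the form the worked example $26^2 \cdot 3 + 26(2)(-1)$ actually uses. The function names are illustrative only.

```python
def sum_cubes_arithmetic(first, d, n):
    """d*S^2 + S*F*(F-d), where S is the plain sum of the n-term sequence."""
    last = first + (n - 1) * d
    s = n * (first + last) // 2  # n(F+L) is always even, so this is exact
    return d * s * s + s * first * (first - d)

def brute_force_cubes(first, d, n):
    """Term-by-term sum of cubes, used only as a check."""
    return sum((first + k * d) ** 3 for k in range(n))

print(sum_cubes_arithmetic(2, 3, 4))   # 1976, i.e. 2^3 + 5^3 + 8^3 + 11^3
print(sum_cubes_arithmetic(1, 1, 10))  # 3025, i.e. (1 + 2 + ... + 10)^2
```

With first = 1 and d = 1 the second term is zero, which is the textbook identity mentioned above.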

Thursday, 20 December 2012

Wednesday, 19 December 2012

Some Notes on the Sum of Squares of the Integers

Recently I have been talking about the pre-calc use of the series formed by the powers of integers, and I wanted to focus a little on the one above for the sums of the squares of the integers, a bit of the history of the relation, and a little history about a couple of problems related to the identity. When I was first confronted with the expression, the 2n+1 term seemed strangely out of place to me, and over the years it seems that students and others also found the formula a little quirky.

The traditional high school approach tends to be to advise the student to memorize the relationship, and then prove it with induction. I find great joy in inductive proofs, and think they are a wonderful tool, but I don't think they either a) help in remembering the identity, or b) make it understandable why the 2n+1 term pops up.

I'm not sure my way of explaining how one might come up with the identity from scratch in a way that ties together logically for most students is any more of an explanation for 2n+1, but it does seem to supply students with a way to produce the sum from scratch.

It is unknown who first came up with the identity, but it was certainly known, and beautifully used, by Archimedes. Archimedes used the sums of squares to find the area inside a spiral.

It was expressed pretty much the way we do it now, without the notation, by several of the Arabic-language scholars, and Fibonacci gives the sum in his Liber Abaci using the first ten integers and gives 10(11)(21)/6 as the solution. Actually he divides by both six and one, to make a point, as he follows with a method for finding the sums of the squares of the odd integers.

Fibonacci explains that you multiply n (in his example 10) times its successor (n+1) times their sum (aha, a reason for the 2n+1) and divide by six, and then by one because one is the difference between ten and eleven.

For the sum of squares of the odd numbers up through nine he offers the same sort of approach; you multiply the last number (9) times its successor (in this case 11) times their sum (20) and then divide by six, and then by two because it is the difference between the numbers. He goes on to apply his method to the sum of squares of general arithmetic sequences, but I digress. Read the master at your leisure. As was common for the time, no general reason is given for the method, and no proof other than the examples given was provided.

When I was pressed to try and make this understandable to high school students, I returned to the ancient Pythagorean knowledge that the square numbers were the sum of two successive triangular numbers.

In the image the 4th and 5th triangular numbers are shown combined to make the square of five.

I chose the triangular numbers because it is easy to show that the nth triangular number can be expressed as (n+1)C2 {the fourth triangular number, 10, is given by 5C2 for example} and read off Pascal's triangle, a "friendly" mystery to most high school students.

More importantly, if you sum the first n triangular numbers, the total can be easily expressed as (n+2)C3. The sum of the first four triangular numbers, 1 + 3 + 6 + 10 = 20, is equal to (4+2)C3.

Now once we have the fact that every square is the sum of two consecutive triangular numbers and that triangular numbers are easy to sum using combinations, we can proceed to show the squares broken into triangular numbers as below:

1 ................. 1

4 .................. 3 + 1

9 .................. 6 + 3

16 .................10 + 6

_________________________________

30 ................. 20 + 10

So the sum of the first four squares is given by (4+2)C 3 + (3+2)C 3

We can generalize this using simple algebra to produce

$1^2+2^2+3^2+\dots+n^2$ = (n+2)C3 + (n+1)C3

$= (n+2)(n+1)n/6 + (n+1)(n)(n-1)/6$

and factoring out the (n+1) and n in both terms we get

$= (n+1)(n)\big((n+2)+(n-1)\big)/6$ (ahhhh, see the 2n+1 now, children???)

$= n(n+1)(2n+1)/6$
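The three facts used in this derivation can be spot-checked with Python's `math.comb` (available from Python 3.8). The helper names below are made up for the illustration.

```python
from math import comb

def triangular(n):
    """nth triangular number, read off Pascal's triangle as (n+1)C2."""
    return comb(n + 1, 2)

def sum_squares_via_triangulars(n):
    """Sum of the first n squares as (n+2)C3 + (n+1)C3."""
    return comb(n + 2, 3) + comb(n + 1, 3)

for n in range(1, 50):
    # every square is the sum of two consecutive triangular numbers
    assert n * n == triangular(n) + triangular(n - 1)
    # the first n triangular numbers sum to (n+2)C3
    assert sum(triangular(k) for k in range(1, n + 1)) == comb(n + 2, 3)
    # and the two combinations together give the textbook formula
    assert sum_squares_via_triangulars(n) == n * (n + 1) * (2 * n + 1) // 6

print(sum_squares_via_triangulars(4))  # 30, i.e. 20 + 10
```

A check over a finite range is of course not a proof, but it does catch slips in the algebra.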

And then it finally made its way into recreational mathematics.

As far as I can find, the first use of the sum of squares in recreational mathematics came about because of a question by the famous Sir Walter Raleigh to his mathematical aide, Thomas Harriot. He supposedly asked Harriot around 1600 how he could quickly compute the number of cannonballs in a stack in the form of a square pyramid (like the one at top). Harriot gave him the answer, but the interest in the problem led to a second question: is there any square pyramidal number which is itself a square? Of course 1 is a trivial example, but were there more? It turns out that there was at least one more: if you sum the first 24 squares, you get 4900, which is the square of 70. But were there any more?

The famous recreational mathematician Edouard Lucas (father of the Towers of Hanoi puzzle) conjectured in 1875 that there were no others, but the question waited until G. N. Watson found a complete proof in 1918.
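A brute-force search is no substitute for Watson's proof, but it shows how sparse the solutions are. This sketch uses `math.isqrt` for an exact integer square-root test. The function names and the search limit of 10,000 layers are arbitrary choices for the example.

```python
from math import isqrt

def square_pyramidal(n):
    """Number of cannonballs in a square pyramid with n layers."""
    return n * (n + 1) * (2 * n + 1) // 6

def square_pyramids_that_are_squares(limit):
    """All (layers, total, root) with layers <= limit whose total is a perfect square."""
    hits = []
    for n in range(1, limit + 1):
        total = square_pyramidal(n)
        root = isqrt(total)
        if root * root == total:
            hits.append((n, total, root))
    return hits

print(square_pyramids_that_are_squares(10_000))  # [(1, 1, 1), (24, 4900, 70)]
```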

Within another decade, the famous son of Sam Loyd, also named Sam, released the first puzzle in which you were challenged to count the total number of squares in a square grid.

In the image above it is easy to see the relation to the sum of squares; there are nine squares that are 1x1, four that are 2x2, and one that is 3x3, for a total of $3^2+2^2+1^2 = 14$ squares. (Of course Loyd's puzzle was somewhat more difficult.)
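The count for an n x n grid can be confirmed by listing every axis-parallel square by its side length and the position of its top-left cell. For the 3x3 grid this gives 9 + 4 + 1 = 14. The function name is invented for this sketch, and it deliberately ignores tilted squares.

```python
def count_grid_squares(n):
    """Count axis-parallel squares of every size in an n x n grid of unit cells."""
    count = 0
    for side in range(1, n + 1):
        # a square of this side can start in any of (n - side + 1)^2 positions
        for row in range(n - side + 1):
            for col in range(n - side + 1):
                count += 1
    return count

for n in (3, 8):
    print(n, count_grid_squares(n), n * (n + 1) * (2 * n + 1) // 6)
# 3 14 14
# 8 204 204
```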

If anyone knows another classic puzzle that invokes the sum of squares I would love to know about it.

A related follow up post prompted by the comment below of Joshua Zucker.

Tuesday, 18 December 2012

Monday, 17 December 2012

Sums of Powers of Integer Sequences

A short while back I mentioned David Wells's new book, Games and Mathematics, and talked about the beautiful theorem of Liouville about the sets of numbers for which the sum of the numbers cubed is equal to the square of their sum.

One of the sets is the set of positive integers: $1^3+2^3+3^3+\dots+n^3 = (1+2+3+\dots+n)^2$.

This is one of three identities (the sum of the integers, the sum of the squares of the integers, and the sum of the cubes of the integers) that show up in pre-calc classes that students are often challenged to memorize, and prove by induction.

I was surprised recently to learn that the simple method I always showed my students was NOT the way the ancients first knew this expression.

I had always assumed that due to their incredible interest in figurate numbers, the early Greeks/Romans/Arabs knew that if any two consecutive triangular numbers are squared, their difference is a cube. $3^2 - 1^2 = 2^3$, and if we jump up to the 5th triangular number, 15, and the 4th, 10, and take the difference of their squares, the result is $15^2 - 10^2 = 5^3$, or 125. It's even pretty easy to show that the result is true with algebra using the (well known to the Greeks) fact that the nth triangular number is 1/2 (n)(n+1).

If we use the notation T(n) to represent the nth triangular number then we can write $1^3+2^3+3^3+\dots+n^3$ as

$T^2(1) + [T^2(2)-T^2(1)] + \dots + [T^2(n)-T^2(n-1)]$ and it is clear that every term except the last cancels, giving us that the sum of the first n cubes is the square of the nth triangular number.
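The telescoping argument is short enough to check numerically. In this sketch `triangular` is just an illustrative helper name, with T(0) taken to be 0.

```python
def triangular(n):
    """nth triangular number, n(n+1)/2, with triangular(0) == 0."""
    return n * (n + 1) // 2

for n in range(1, 100):
    # the difference of squares of consecutive triangular numbers is a cube
    assert triangular(n) ** 2 - triangular(n - 1) ** 2 == n ** 3
    # so the cubes telescope to the square of the nth triangular number
    assert sum(k ** 3 for k in range(1, n + 1)) == triangular(n) ** 2

print(triangular(5) ** 2 - triangular(4) ** 2)  # 125
```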

But it seems that Nicomachus, who lived around 100 AD, presented the sums of cubes by looking at the sequence of odd numbers, which he called the gnomons of squares. (A gnomon is the Greek name for a device similar to today's common carpenter's square.) If you take a square array of points, such as the $3^2$ shown, adding the fourth odd number, 7, produces the next square. This arrangement of points or squares was called a gnomon.

As early as the Pythagoreans it was well known that the sum of the first n odd numbers produced a square number: $1+3+5+7 = 4^2$.

To discuss the sum of cubes, Nicomachus points out that if you start with 1, 3, 5, 7, 9, 11, 13, ... you note that the first (1) is a cube, and the next two (3+5 = 8) are a cube, and the next three (7+9+11 = 27) are a cube. As each cube is added, we add additional gnomons to maintain a square number.

Since the number of gnomons added was a triangular number (1 + 2 + 3 etc. odd gnomons), they must add up to the square of a triangular number, and as pointed out above, they had known for half a millennium that the nth triangular number was 1/2 (n)(n+1), and so the square of that number would be the same as the sum of the first n cubes.
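Nicomachus's grouping of the odd numbers can be reproduced in a few lines. This is a sketch only: `nicomachus_groups` is an invented name, and `itertools.count` and `itertools.islice` are used simply to hand out consecutive odd numbers in runs of 1, 2, 3, ...

```python
from itertools import count, islice

def nicomachus_groups(n):
    """Split the odd numbers into consecutive runs of length 1, 2, ..., n."""
    odds = count(1, 2)  # 1, 3, 5, 7, ...
    return [list(islice(odds, k)) for k in range(1, n + 1)]

groups = nicomachus_groups(4)
print(groups)                    # [[1], [3, 5], [7, 9, 11], [13, 15, 17, 19]]
print([sum(g) for g in groups])  # [1, 8, 27, 64]

# The runs use 1 + 2 + 3 + 4 = 10 odd numbers in all, and the first 10 odd
# numbers sum to 10^2, so the four cubes together make the square of 10.
assert sum(sum(g) for g in groups) == 10 ** 2
```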

I wanted to point out a little about the history of the sum of the squares of the integers, which seems to include a very non-intuitive 2n+1 term that seems to be out of place, and a general way I use to derive that formula that students seem not to see, but I will save that for a day or two away.

One of the sets is the set of positive integers. (13+23+33+... +n3)= (1+2+3+...+n)2.

This is one of three identities (the sum of the integers, the sum of the squares of the integers, and the sum of the cubes of the integers) that show up in pre-calc classes that students are often challenged to memorize, and prove by induction.

I was surprised recently to learn that the simple method I always showed my students was NOT the way the ancients first knew this expression.

I had always assumed that due to their incredible interest in figurate numbers, the early Greeks/Romans/Arabs knew that if any two consecutive triangular numbers are squared, their difference is a cube. 32 - 12 = 23, and if we jump up to the 5th triangular number 15, and the 4th, 10, and take the difference of their squares, the result is 152 - 102 = 53 or 125. It's even pretty easy to show that the result is true wtih algebra using the (well known to the Greeks) fact that the Nth triangular number is 1/2 (n)(n+1).

If we use the notation T(n) to represent the nth triangular number then we can write 13+23+33 +... + n3 as

T2(1) + T2(2)-T2(1) + .... + T2(n)-T2(n-1) and it is clear that every term except the last cancels, giving us the sumo of the first n cubes is the square of the nth triangular number.

But it seems that Nichomachus, who lived around 100 AD, presented the sums of cubes by looking at the sequence of odd numbers which he called the gnomens of squares. (A gnomen is the greek name for a device similar to today's common carpenter's square. If you take a square array of points, such as 3 2shown, adding the fourth odd number, 7, produces the next square. This arrangement of points or squares was called a gnomen.

As early as the Pythagoreans it was well known that the sequence of n odd numbers produced a square number. 1+3+5+7 =42

To discuss the sum of cubes, Nichomachus points out that if you start with 1,3,5,7,9,11,13... you note that the first (1) is a cube, and the next two (3+5=8) are a cube, and the next three, 7+9+11 = 27 are a cube. As each cube is added, we add additional gnomens to maintain a square number.

Since the number of gnomens added was a triangular number, 1 + 2 + 3 etc odd gnomens, they must add up to the square of a triangular number, and as pointed out above, they had known for half a millennium that the first n triangular numbers added up to 1/2 (n)(n+1) and so the square of that number, would be the same as the sum of the first n cubes.

I wanted to point out a little about the history of the sum of the squares of the integers, which seems to include a very non-intuitive 2n+1 term that seems to be out of place, and a general way I use to derive that formula that students seem not to see, but I will save that for a day or two away.

Wednesday, 12 December 2012

Parabolas, Tangents, and the Wallace-Simson Line

The oft-called Simson line was attributed to Simson by Poncelet, but is now frequently known as the Wallace-Simson line since it does not actually appear in any work of Simson. (Oh go on, ask your teacher, so WHY do we still call it the Simson line at all?)

The Wallace for whom the line should more probably be named is William Wallace FRSE (born 23 September 1768, Dysart; died 28 April 1843, Edinburgh), the Scottish mathematician and astronomer who invented the eidograph, a more complicated version of the pantograph used to make scale images of drawings. He was a protégé of John Playfair, and teacher to Mary Somerville. He wrote about the line in 1799. He also goes uncredited for his 1807 proof of a result about polygons of equal area, which has become the Bolyai–Gerwien theorem. He was also one of the first in England/Scotland to promote the calculus as taught on the Continent.

The theorem says that if a triangle is inscribed in a circle, and perpendiculars are dropped from a point on this circumcircle to the three sides of the triangle (extended as needed), the feet of these perpendiculars will lie on a straight line. It works the other way too: if the feet of the perpendiculars from a point to the three sides lie on a straight line, then the point is on the circumcircle.
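A quick numerical experiment makes the theorem concrete. This is a minimal Python sketch under my own choice of triangle (three arbitrary points on the unit circle); the helper names `on_circle`, `foot`, and `collinearity_defect` are hypothetical.

```python
import math


def on_circle(theta):
    """Point on the unit circle at angle theta (radians)."""
    return (math.cos(theta), math.sin(theta))


def foot(p, a, b):
    """Foot of the perpendicular from p to the line through a and b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / (dx * dx + dy * dy)
    return (a[0] + t * dx, a[1] + t * dy)


def collinearity_defect(p, q, r):
    """Twice the signed area of triangle pqr; zero when the points are collinear."""
    return (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])


# A triangle inscribed in the unit circle.
A, B, C = on_circle(0.3), on_circle(2.1), on_circle(4.4)


def pedal_defect(p):
    """Collinearity defect of the three feet of perpendiculars from p."""
    return collinearity_defect(foot(p, B, C), foot(p, C, A), foot(p, A, B))


print(pedal_defect(on_circle(5.5)))  # point on the circumcircle: essentially zero
print(pedal_defect((0.2, 0.1)))      # point off the circumcircle: clearly nonzero
```

The first value is zero up to rounding error, so the three feet are collinear; the second is not, which is the converse at work.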

I mentioned recently in a description of David Wells's new book, Games and Mathematics, that I keep finding out new stuff. Well, he pointed out a connection between the Wallace line (he uses Simson, but I believe he knows better) and tangents of a parabola.

If you find three tangents to a parabola and construct the circumcircle of the triangle formed by their mutual intersections, the circumcircle will pass through the focus of the parabola.

Tricky and cool, but what does that have to do with the Wallace line? Well, if you drop a perpendicular from the focus to ANY tangent, the foot of the perpendicular will always fall on the line tangent to the parabola at the vertex. The tangent at the vertex is a Wallace line for any triangle formed by three tangents to a parabola.
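Both claims can be tested numerically. The sketch below assumes the specific parabola y = x², whose focus is (0, 1/4) and whose tangent at the vertex is the x-axis; that choice, the three sample points of tangency, and all the function names are mine, purely for illustration.

```python
import math

FOCUS = (0.0, 0.25)  # focus of the parabola y = x^2


def tangent_points(t):
    """Two points on the tangent to y = x^2 at x = t (slope 2t)."""
    return (t, t * t), (t + 1.0, t * t + 2.0 * t)


def tangent_intersection(s, t):
    """Intersection of the tangents at x = s and x = t."""
    return ((s + t) / 2.0, s * t)


def foot(p, a, b):
    """Foot of the perpendicular from p to the line through a and b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    u = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / (dx * dx + dy * dy)
    return (a[0] + u * dx, a[1] + u * dy)


def circumcircle(p, q, r):
    """Center and radius of the circle through p, q, r."""
    d = 2 * (p[0] * (q[1] - r[1]) + q[0] * (r[1] - p[1]) + r[0] * (p[1] - q[1]))
    sp, sq, sr = (p[0] ** 2 + p[1] ** 2, q[0] ** 2 + q[1] ** 2, r[0] ** 2 + r[1] ** 2)
    cx = (sp * (q[1] - r[1]) + sq * (r[1] - p[1]) + sr * (p[1] - q[1])) / d
    cy = (sp * (r[0] - q[0]) + sq * (p[0] - r[0]) + sr * (q[0] - p[0])) / d
    return (cx, cy), math.hypot(p[0] - cx, p[1] - cy)


ts = (-1.5, 0.4, 2.0)  # x-coordinates of three points of tangency
vertices = [tangent_intersection(ts[i], ts[j]) for i, j in ((0, 1), (1, 2), (0, 2))]
center, radius = circumcircle(*vertices)

# Distance from the circumcenter to the focus should equal the radius.
focus_distance = math.hypot(FOCUS[0] - center[0], FOCUS[1] - center[1])
print(focus_distance, radius)

# Feet of the perpendiculars from the focus to each tangent: y should be 0.
feet = [foot(FOCUS, *tangent_points(t)) for t in ts]
print(feet)
```

The feet all land on the x-axis, the tangent at the vertex, so that line is the Wallace line of the focus with respect to the tangent triangle.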

Tuesday, 11 December 2012

Games and Mathematics, by David Wells

I recently mentioned The Joy of X as a great gift for the HS student in your life. Today I would add another, in fact several more, that I think would be excellent gifts for the young mathematician in your life this Christmas.

I have been a fan of David Wells for several years. His Penguin Book of Curious and Interesting Mathematics, The Penguin Book of Curious and Interesting Numbers, and The Penguin Dictionary of Curious and Interesting Geometry have been favorites for a long time. Now he has just released Games and Mathematics, which may be the best of them all for the talented HS mathematician.

Folks who have followed me for a while know that I keep up on most of the math books for the masses. I read and enjoy them for the way they present information, and on rare occasions I find some gem tucked in that I had not known before, as I have in this book.

In particular, I learned about a theorem by Joseph Liouville that ties together one of those topics that I always assumed was just an isolated coincidence in advanced math classes. I apologize to all the kids I let slip by without pointing this one out. I was made even more embarrassed by the fact that the theorem appears in the book Math Competition Tutorial (for Grade 6, primary school), which was published by Peking Normal University Publishing Company and written by Sun Ruiqing, who is a professor at Beijing Normal University.

The common topic is the fact that the sum of the first n cubes is the square of the sum of the first n integers: $1^3 + 2^3 + 3^3 + \dots + n^3 = (1 + 2 + 3 + \dots + n)^2$.

What David Wells points out is that this is just a special case of a more general theorem, the one that Liouville discovered. There are an infinite number of number sets which have the property that the sum of their cubes is equal to the square of their sum. Liouville discovered that if you take any number and write its divisors, and then the number of factors for each of these divisors, it turns out that that set of numbers has the property mentioned above.

For example, if we pick the number six, the divisors are 6, 3, 2, and 1. Now if we make a list of the number of factors that each of these numbers has, we see that:

6 has 4 factors, 1,2,3, and 6

3 has 2 factors, 1 and 3

2 has 2 factors, 1 and 2

and 1 has 1 factor, itself.

And the numbers 4, 2, 2, 1 form a set which has the property that $4^3 + 2^3 + 2^3 + 1^3 = 64 + 8 + 8 + 1 = 81 = (4 + 2 + 2 + 1)^2$.

And that ALWAYS works. Try it on your favorite number.

How does this specialize into the formula memorized by Pre-Calc students everywhere? Start with a power of a prime, say $3^5$. Its factors are $3^5$, $3^4$, $3^3$, $3^2$, $3^1$, and $3^0$, and the number of divisors of each of these numbers is 6, 5, 4, 3, 2, and 1, so by Liouville's mathematical discovery, $1^3 + 2^3 + 3^3 + \dots + 6^3 = (1 + 2 + 3 + \dots + 6)^2$. Replace the 5 with n-1 and you have the general identity above.
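Trying it on your favorite number is a few lines of Python. This is only an illustrative sketch, not code from Wells's book; `divisors`, `divisor_counts`, and `liouville_holds` are names invented here, and the brute-force divisor search is meant for small numbers.

```python
def divisors(n):
    """All positive divisors of n, found by brute force."""
    return [d for d in range(1, n + 1) if n % d == 0]


def divisor_counts(n):
    """For each divisor d of n, the number of divisors that d has."""
    return [len(divisors(d)) for d in divisors(n)]


def liouville_holds(n):
    """True when the sum of the cubes of the counts equals the square of their sum."""
    counts = divisor_counts(n)
    return sum(c ** 3 for c in counts) == sum(counts) ** 2


print(divisor_counts(6))       # [1, 2, 2, 4]
print(divisor_counts(3 ** 5))  # [1, 2, 3, 4, 5, 6]
print(all(liouville_holds(n) for n in range(1, 500)))
```

The second line shows the prime-power case: the counts are just 1 through 6, so the identity collapses to the familiar sum-of-cubes formula.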

I think a talented young mathematician would love wandering through Wells's discussion of this and other ideas that tend to take them way beyond the hum-drum classroom exercises. And each of the others above is an excellent adventure for them as well.

Make it a Merry Mathematical Christmas for someone, perhaps yourself.

Thursday, 6 December 2012

An Untapped Source to Boost the Economy

I have recently been receiving some nice graphic posts from a young lady named Jessica who does graphic stats for something called LearnStuff about which I know little. What I do know is that these are the kind of graphics I would have loved to have for my AP Stats classroom to stimulate their thoughts about the subject.

The recent one she sent was about the inequity of women's pay for equal work. Here is one that jumped out at me as we sit and wait for congressional action before the oft-touted fiscal cliff destroys us all.

Wow. With that increase of income in the US, if any of it went to consumption, that could actually fuel the kind of recovery that would help alleviate the economic problems and debt payments we face. Of course, all those women are probably rich white women who would just put their money in their off-shore tax shelters....

or maybe not:

I haven't checked the statistics behind this, but they list sources at the bottom of the site, so you are welcome to follow up and check on these, or ask your students to do the same.

"Graphics created by: LearnStuff.com"

Some History of a Weighty Problem

A while back my blog got a nice mention on Gary Antonick’s “Numberplay” in the New York Times. That seems only fair as I was in the process of writing this post about one of the problems in his column which relates back to a nice old recreational problem from way back.

Gary’s version of the problem reads like this:

You have a balance scale and a single chain with thirteen links. Each link of the chain weighs one ounce. How many links of the chain do you need to break in order to be able to weigh items from 1 to 13 ounces in 1-ounce increments?

This is a complication of the ancient problem in two ways, first by having to divide up the chain to get links, but also because it does not say how the weighing is to be performed.

In recreational history such “balance scale” problems have two basic forms: one in which all the weights have to go on one side to balance the unknown object, and a more clever approach (my bias) that allows known weights to be on both pans of the balance. In the end Gary’s solution goes for the both-sides approach, which allows the chain to be cleverly cut in only one place (producing three sections of one, three, and nine links) and so allows the weighing of any integer weight as requested. (Cut the fourth link and remove it for the one, leaving three on one end of it and nine on the other.)
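To see that pieces of one, three, and nine links really do cover every load from 1 to 13 ounces, here is a short Python sketch. It simply tries every placement of each piece (left pan, right pan, or unused); the function name `weighable` is invented for this example.

```python
from itertools import product


def weighable(weights):
    """Map each positive load to one placement of the weights that balances it.

    A sign of +1 puts the weight on the pan opposite the load, -1 puts it on
    the same pan as the load, and 0 leaves it off the scale.
    """
    found = {}
    for signs in product((-1, 0, 1), repeat=len(weights)):
        load = sum(s * w for s, w in zip(signs, weights))
        if load > 0:
            found.setdefault(load, signs)
    return found


placements = weighable([1, 3, 9])
for load in sorted(placements):
    print(load, placements[load])
```

For example, a 5-ounce item is balanced by putting the nine opposite it and the three and the one alongside it, since 9 - 3 - 1 = 5.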

Searching for older versions of the problem, I quickly found a post in the Problem of the Week section of a 1961 Popular Science.

This one particularly allows the weights to be placed on both pans of the balance.

The solution a month later not only provides the same solution approach as Gary’s column, but also gives a bit of (not quite correct) history of the problem.

The credits to Tartaglia and Bachet are not wrong; they are just not complete. This is understandable because the attributions probably came from one of the outstanding recreational mathematics books of the early 20th century, Mathematical Recreations and Essays by W. W. Rouse Ball, in which he also credits Bachet and Tartaglia:

Both solutions were given over three hundred years earlier than Tartaglia, by Leonardo of Pisa, often known now as Fibonacci. From Sigler’s translation of the famous Liber Abaci I find:

Fibonacci also posed a version avoiding the use of weights in both pans by requiring that the total weight for each increment must be presented on a given day, in this case where each ounce was represented in a valuable metal, requiring the powers-of-two solution.

From David Singmaster's notes on the Chronology of Recreational Mathematics I found an earlier reference, but could find no more about the source named there:

"c1075 Tabarī: Miftāḥ al-muʿāmalāt - first Use of 1,3,9,... as Weights."

If some reader knowledgeable about this source can provide more information I would be very grateful.

Even among those whose historical education has fully informed them of the earlier usages, the use of Bachet’s name is still common, as illustrated in a relatively new article which suggests a newer version of the problem, Edwin O’Shea’s "Bachet’s Problem: As Few Weights to Weigh Them All":

The generalized Bachet’s problem that we will explore here is that of finding appropriate weights when one replaces 40 with any positive integer. The full generalization, due to Park and studied further by Rødseth, not only tells us the minimum number of parts needed when 40 is replaced by any m but all possible ways to accordingly break up a given m. Furthermore, we can also count the number of distinct ways to break up such an m. For example, when we replace 40 by m = 25 we’ll still need no more than four parts but there are now nine ways to break up 25 to solve Bachet’s problem. Written as partitions with four parts, these are:

25 = 1 + 3 + 9 + 12 = 1 + 3 + 8 + 13 = 1 + 3 + 7 + 14

= 1 + 3 + 6 + 15 = 1 + 3 + 5 + 16 = 1 + 3 + 4 + 17

= 1 + 2 + 7 + 15 = 1 + 2 + 6 + 16 = 1 + 2 + 5 + 17
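The count of nine can be confirmed by brute force. The sketch below is not Park's or Rødseth's method; it just enumerates every partition of m into k parts and keeps those whose parts, placed on either pan, weigh every integer from 1 to m. The names `weighs_all` and `bachet_partitions` are hypothetical.

```python
from itertools import product


def weighs_all(parts, m):
    """True if every load 1..m is a signed sum of the parts (each used at most once)."""
    reachable = {
        sum(s * p for s, p in zip(signs, parts))
        for signs in product((-1, 0, 1), repeat=len(parts))
    }
    return all(load in reachable for load in range(1, m + 1))


def bachet_partitions(m, k):
    """Partitions of m into k non-decreasing positive parts that weigh every 1..m."""
    found = []

    def extend(prefix, remaining, smallest):
        if len(prefix) == k - 1:
            # The last part is forced; keep it only if the order stays non-decreasing.
            if remaining >= smallest and weighs_all(prefix + [remaining], m):
                found.append(prefix + [remaining])
            return
        for part in range(smallest, remaining + 1):
            extend(prefix + [part], remaining - part, part)

    extend([], m, 1)
    return found


for parts in bachet_partitions(25, 4):
    print(parts)
print(len(bachet_partitions(25, 4)))
```

Running the same search with m = 40 returns only 1 + 3 + 9 + 27, the classical answer.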

This brings us back to the NY Times version of the problem, but we might ask, how would you split the 13-link chain if you had to be able to pay any amount from 1 to 13 ounces of gold on a given day, as in Fibonacci's second version of the problem?

Monday, 3 December 2012

Antiparallels, An Overlooked HS Geometry Beauty

I would think it is pretty fundamental in typical HS classrooms that students recognize that a line parallel to one side of a triangle cuts the other two sides at angles congruent to the angles at the ends of that side. Eventually they can prove that the parallel line and the two sides it cuts form a triangle similar to the original triangle.

Almost none of them, and perhaps very few of their teachers, know that there is a second type of line which can be drawn to cut the two sides which will also form a similar triangle, and thus must also form angles congruent to the two base angles of the original triangle. It's called the anti-parallel now, but it used to be called a subcontrary line, at least by Apollonius.

There are several nice ways to produce an antiparallel in a triangle. A nice general way is to use the two vertices of one leg and a point on one of the other two legs to construct a circle. The circle will then cut the other leg in a fourth point, which is the other vertex of the antiparallel. These four points are the vertices of a cyclic quadrilateral, for which the opposite angles are supplementary. This makes it easy to see that the antiparallel forms angles on one leg congruent to the original angle on the other leg.

A second way is to draw an altitude from two of the vertices to the opposite sides. The segment connecting the feet of these two altitudes is also antiparallel to the third side.

*image from Wolfram Mathworld
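The altitude construction can be checked with coordinates. This is a minimal Python sketch using an acute triangle I picked arbitrarily; the labels E and F for the feet of the altitudes and the helper names `foot` and `angle` are my own.

```python
import math


def foot(p, a, b):
    """Foot of the perpendicular from p to the line through a and b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / (dx * dx + dy * dy)
    return (a[0] + t * dx, a[1] + t * dy)


def angle(vertex, p, q):
    """Angle p-vertex-q in degrees."""
    ux, uy = p[0] - vertex[0], p[1] - vertex[1]
    vx, vy = q[0] - vertex[0], q[1] - vertex[1]
    return math.degrees(math.atan2(abs(ux * vy - uy * vx), ux * vx + uy * vy))


A, B, C = (0.0, 0.0), (6.0, 0.0), (2.0, 5.0)
E = foot(B, A, C)  # foot of the altitude from B, on side AC
F = foot(C, A, B)  # foot of the altitude from C, on side AB

# Segment EF is antiparallel to BC: the base angles swap sides.
print(angle(E, A, F), angle(B, A, C))  # angle AEF matches angle ABC
print(angle(F, A, E), angle(C, A, B))  # angle AFE matches angle ACB
```

A parallel to BC would reproduce angle B on side AB; the antiparallel reproduces it on side AC instead, which is the whole point of the name.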

If you construct the circumcircle of the triangle, the tangent at the vertex opposite a side will be anti-parallel to that side. (This doesn't strike me as a simple proof, but I may be overlooking something. I often do. If you have a simple proof, high school level for example, I would love to see and share it.)

Just as the median bisects all lines parallel to the base, its reflection in the angle bisector (called the symmedian) will bisect each anti-parallel.

The antiparallel shows up as the solution to an optimization problem that was first proved by Giovanni Fagnano in 1775: For a given acute triangle determine the inscribed triangle of minimal perimeter. Turns out the answer is the triangle formed by the three anti-parallels connecting the feet of the three altitudes, called the orthic triangle.

For slightly more advanced students who have been exposed to cones, it is instructive to point out that for an oblique circular cone (one in which the axis is not perpendicular to the base; many students graduate from HS without ever having been made aware that such cones exist, much less those whose base is non-circular) there is more than one type of plane which will cut the cone in a circle. A cutting plane parallel to the base is one type, and of course by now you suspect that the other type is a plane anti-parallel to the base.

*image from Paramanand's Math Notes

Maybe soon I'll write about the anti-CENTER.

Addendum: After a comment by 1SAEED9, I realized that there is a simple proof that the tangent at the vertex opposite a side is antiparallel to that side.

It is easy to see that angles DBA and BCA both subtend the same arc, and thus have the same measure. Angles CBA, DBA, and EBD add up to 180 degrees, and the three interior angles of the triangle, CBA, CAB, and BCA, also add up to 180 degrees. Since BCA and DBA are congruent, when we subtract these from each sum and remove CBA from both, we are left with the fact that EBD must be congruent to CAB. We can repeat this process on the opposite angle and we are done. Easier than I imagined.

Sunday, 2 December 2012

Oops !!! Can You Find the Error?

The gracious Bob Mrotek often takes the time to keep me informed of mathematical curiosities that show up on the web that I might have missed, for which I am truly grateful.

Recently he sent me a picture of a clock in which each hour was represented by a mathematical relationship involving three nines. This is similar to the four-fours type of problem that teachers often use, about which I gave some history here.

Bob informed me that the choice of three nines comes from the fact that the group which had the clock face designed were members of the "Triple Nine Society", "a group that consists of the members that score at or above the 99.9th percentile on IQ tests". (The fact that Bob is aware of this little piece of information tells me that he obviously runs with a much higher intellectual group than I.)

I will save the Society's clock for last, as a spoiler to a mild puzzle. Bob had sent me the clock because he first noticed a "copy" of the clock, obviously not from the same group, which contains a mistake. It reminded me of questions I used to test (torture) my advanced math students with, in which I would write several expressions of which one was NOT true, then sing the little ditty from Sesame Street,

"One of these things is not like the others, which one isn't the same?"

Well, this clock that Bob sent me has twelve expressions that demonstrate the hour, but one of them is "not like the others." It has an error. Time yourself: how long does it take to find the error?

Here is the "copy"

If you need a hint, or to confirm your solution, the true Triple Nine Society clock is below. Check and see if the one you thought was wrong is right on this one.

And thanks again to Bob Mrotek for the heads-up.

Wednesday, 14 November 2012

New Solution to Very Old Math Problem

A few months ago I wrote about the history of one of the older mathematical problems in existence, the river-crossing problems such as the cabbage/goat/wolf problem.

The problems date back to the 800s and Alcuin of York.

Amazingly, a brand new solution to the problem has just been discovered and posted here. Enjoy!

Thursday, 1 November 2012

Iterating Strings of Primes

I think this can be a great independent exploration for interested and capable students, so I will expand a little on the question.

It is obvious, I think, that extending 11 by adding more ones will quickly produce a multiple of three (111 = 3 x 37), and 1111 and 11111, as well as 1111111, are all composite. Extending 13 to 133, 1333, and 13333 produces no primes either (although they are all semi-primes, with exactly two prime factors, and students might wonder how far, or how often, that is true for more 3's). 17 undergoes a similar failure to produce primes at first, although 1777 is prime. But 19, 199, and 1999 are all prime. Unfortunately, 19999 is 7 x 2857.

With 23 we find that 23, 233, 2333, and 23333 are again all prime. Is it possible for this method to produce more than four primes in a row? We might look instead at extending the first digit (113, 1113, etc. for 13), and similarly for the first digits of other two-digit numbers. Conversely, students might explore how many times the digit must be extended before another prime is found... will 1333..3 EVER be prime?... and will there always be another prime if more digits are added?
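Students with a little programming can push the exploration further than hand calculation allows. Here is a minimal Python sketch using plain trial division, which is fine for numbers of this size but too slow for long strings of digits; the function names and the cap of eight extensions are arbitrary choices for the illustration.

```python
def is_prime(n):
    """Primality by trial division; adequate for numbers up to about ten digits."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    f = 3
    while f * f <= n:
        if n % f == 0:
            return False
        f += 2
    return True


def prime_factors(n):
    """Prime factors of n in increasing order, with repetition."""
    factors, f = [], 2
    while f * f <= n:
        while n % f == 0:
            factors.append(f)
            n //= f
        f += 1
    if n > 1:
        factors.append(n)
    return factors


def extensions(seed, count):
    """The seed followed by `count` numbers made by repeating its last digit."""
    s = str(seed)
    return [int(s + s[-1] * k) for k in range(count + 1)]


def prime_run(seed, limit=8):
    """The unbroken run of primes at the start of seed's extensions."""
    run = []
    for n in extensions(seed, limit):
        if not is_prime(n):
            break
        run.append(n)
    return run


for seed in (13, 17, 19, 23):
    print(seed, prime_run(seed))

# The extensions of 13 that fail are each a product of two primes.
for n in extensions(13, 3)[1:]:
    print(n, prime_factors(n))
```

Changing `extensions` to repeat the first digit, or the last two digits, gives the other variations mentioned in this post.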

For more than two digits there are extended options. We could repeat the last digit, extending xyz to xyzz, or alternately replicating the last two digits, xyzyz.

For students working on divisibility schemes, testing primes, etc., this is probably great practice, and for others, it could just be good fun.

Tuesday, 30 October 2012

Reposting, Roy G Biv

I like to post this one at least once each year. Enjoy

----------------------------------------------------------

Every kid learns it in science class (or from "They Might Be Giants", see below): the colors of the rainbow (visible light spectrum) are given by the mnemonic ROY G BIV for Red, Orange, Yellow, Green, Blue, Indigo, and Violet.

But really, before you knew the mnemonic if someone asked, "What colors do you see?" what would you have said?

I don't know if anyone has ever tested young kids before they are exposed to science classes, but it seems it used to be common to describe four colors, Red, Yellow, Green, and Blue... So why do we see (or why are we told to see) seven?

Turns out, it was old Isaac Newton, and his dedication to the ancient Greek ideas. Here is a clip from a blog by Patricia Fara, a senior lecturer at Cambridge, and author of "Science: A Four Thousand Year History" at the Nature web site.

Consider Isaac Newton. He believed so firmly in the Greek idea of a harmonic universe that he divided the rainbow into seven colours to correspond with the musical scale. Before then, although opinions varied, artists mostly showed rainbows with four colours. It is, of course, impossible to make any objective decision about the correct number, because the spectrum of visible light varies continuously: there is no sharp cut-off between bands of different colours, so how you think about a rainbow affects how you see it. Be honest - can you tell the difference between blue, indigo and violet?

I found this on Wikipedia: "In Classical Antiquity, Aristotle had claimed there was a fundamental scale of seven basic colors. In the Renaissance, several artists tried to establish a new sequence of up to seven primary colors from which all other colors could be mixed. In line with this artistic tradition, Newton divided his color circle, which he constructed to explain additive color mixing, into seven colors.[1] His color sequence with the unusual color indigo is still kept alive today by the Roy G. Biv mnemonic. Originally he used only five colors, but later he added orange and indigo, in order to match the number of musical notes in the major scale."

So I can't find out who first came up with the mnemonic, but I did find a song about it... lyrics are here. And Aaron Wagner sent me a link to a video of a different ROY G BIV song by "They Might Be Giants" that his four-year-old highly endorses. Thanks to Aaron and his child for the tip.

They Might Be Giants - Roy G Biv from They Might Be Giants on Vimeo.

On This Day in Math - October 30

'Mathematics is the science that uses easy words for hard ideas.'

~ Edward Kasner

The 304th day of the year; 304 is the sum of six consecutive primes starting with 41, and also the sum of eight consecutive primes starting with 23. (And for those who keep up with such things, it is also the record number of wickets taken in an English cricket season, by Tich Freeman in 1928.)
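For anyone who wants to check the two prime sums, here is a minimal Python sketch. The helper names `primes_from` and `consecutive_prime_sum` are mine, made up for illustration, and the trial-division prime test is only meant for small numbers like these.

```python
from itertools import islice


def primes_from(start):
    """Yield the primes >= start, found by simple trial division."""
    n = max(start, 2)
    while True:
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            yield n
        n += 1


def consecutive_prime_sum(start, count):
    """Sum `count` consecutive primes, beginning with the first prime >= start."""
    return sum(islice(primes_from(start), count))


if __name__ == "__main__":
    # 41 + 43 + 47 + 53 + 59 + 61
    print(consecutive_prime_sum(41, 6))
    # 23 + 29 + 31 + 37 + 41 + 43 + 47 + 53
    print(consecutive_prime_sum(23, 8))
```

Both calls should come out to 304.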

1613 Kepler married his second wife (the first died of typhus). She was fifth on his slate of eleven candidates. The story that he used astrology in the choice is doubtful. *VFR Kepler married the 24-year-old Susanna Reuttinger. He wrote that she "won me over with love, humble loyalty, economy of household, diligence, and the love she gave the stepchildren." According to Kepler's biographers, this was a much happier marriage than his first. *Wik

1710 William Whiston, whom Newton had arranged to succeed him as Lucasian Professor at Cambridge in 1701, was deprived of the chair and driven from Cambridge for his unorthodox religious views. Whiston was removed from his position at Cambridge, and denied membership in the Royal Society for his “heretical” views. He took the “wrong” side in the battle between Arianism (a unitarian view) and the Trinitarian view, but his brilliance still made the public attend to his proclamations. When he predicted the end of the world by a collision with a comet on October 16th of 1736, the Archbishop of Canterbury had to issue a denial to calm the panic. (VFR put it this way, "it is not acceptable to be a unitarian at the College of the Whole and Undivided Trinity".)

His translation of the works of Flavius Josephus may have contained a version of the famous Josephus Problem, and in 1702 Whiston's Euclid discusses the classic problem of the Rope Round the Earth (if one foot of additional length is added, how high will the rope be?). I am not sure of the dimensions in Whiston's problem, and would welcome input. I have searched the book and cannot find the problem in it, but David Singmaster has said it is there, and he is not an easy source to reject. It is said that Ludwig Wittgenstein was fascinated by the problem and used to pose it to students regularly.
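The surprise in the rope problem is that the answer does not depend on the size of the Earth at all. A circle of radius r has circumference 2πr, so lengthening the rope by ΔL raises it uniformly by ΔL/(2π). Here is a small Python sketch of that arithmetic. The one-foot figure is the one quoted above; the Earth radius of about 20,902,231 feet (6,371 km) and the basketball radius are my own assumptions for illustration, not Whiston's dimensions, which I still don't know.

```python
import math


def rope_lift(extra_length, radius):
    """Height gained all the way around when a rope that fits snugly around
    a circle of the given radius is lengthened by extra_length.
    Both arguments must be in the same unit."""
    old_circumference = 2 * math.pi * radius
    new_radius = (old_circumference + extra_length) / (2 * math.pi)
    return new_radius - radius


if __name__ == "__main__":
    earth_radius_ft = 20_902_231  # assumed mean radius, roughly 6,371 km
    basketball_radius_ft = 0.39   # assumed, just for contrast
    for radius in (earth_radius_ft, basketball_radius_ft):
        lift_inches = 12 * rope_lift(1.0, radius)
        print(f"radius {radius} ft: rope rises about {lift_inches:.2f} inches")
```

Either way the rope rises by 1/(2π) of a foot, a little under two inches.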

1735 Ben Franklin published “On the Usefulness of Mathematics,” his only published article on mathematics. *VFR

1826 Abel presented a paper to the French Academy of Science that was ignored by Cauchy, who was to serve as referee. The paper was published some twenty years later.*VFR

1937 The closest approach to the Earth by an asteroid, Hermes, was measured to be 485,000 miles, which, to an astronomer, is a mere hair's width (asteroid now lost). *TIS

1945 The first conference on Digital Computer Technique was held at MIT. The conference was sponsored by the National Research Council, Subcommittee Z on Calculating Machines and Computation. Attended by the Whirlwind team, it influenced the direction of this computer. (The Whirlwind computer was developed at the Massachusetts Institute of Technology. It was the first computer that operated in real time, the first that used video displays for output, and the first that was not simply an electronic replacement of older mechanical systems.) *CHM

1978 Laura Nickel and Curt Noll, eighteen-year-old students at California State at Hayward, show that 2^21701 − 1 is prime. This was the largest prime known at that time. *VFR (By February of the next year, Noll had found another, 2^23209 − 1. By April, a still larger prime had been found.)
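Numbers of the form 2^p − 1 are usually checked with the Lucas-Lehmer test: for an odd prime p, start with s = 4, repeat s → s² − 2 (mod 2^p − 1) a total of p − 2 times, and the number is prime exactly when the result is 0. The Python sketch below is my own illustration of that standard test, not a reconstruction of the program Nickel and Noll ran; the function name `is_mersenne_prime` is made up for the example.

```python
def is_mersenne_prime(p):
    """Lucas-Lehmer test for 2**p - 1.

    Only valid when p itself is prime; the caller is trusted on that point.
    """
    if p == 2:
        return True  # 2**2 - 1 = 3
    m = (1 << p) - 1
    s = 4
    for _ in range(p - 2):
        s = (s * s - 2) % m
    return s == 0


if __name__ == "__main__":
    # Small prime exponents: 11, 23 and 29 give composite Mersenne numbers.
    print([p for p in (3, 5, 7, 11, 13, 17, 19, 23, 29, 31) if is_mersenne_prime(p)])
    # The 1978 record exponent; this may take a few seconds in pure Python.
    print(is_mersenne_prime(21701))
```

Python's built-in big integers make this feasible on a modern laptop, where the 1978 computation needed hours of mainframe time.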

1992 The Vatican announced that a 13-year investigation into the Catholic Church’s condemnation of Galileo in 1633 will come to an end and that Galileo was right: The Copernican Theory, in which the Earth moves around the Sun, is correct and they erred in condemning Galileo. *New York Times for 31 October 1992.

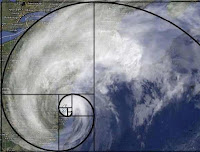

2012 After Hurricane Sandy came ashore in New Jersey on the 29th, the huge weather system was captured with an overlay to emphasize its Fibonacci-like structure. *HT to Bob Mrotek for sending me this image

1840 Joseph Jean Baptiste Neuberg (30 Oct 1840 in Luxembourg City, Luxembourg - 22 March 1926 in Liège, Belgium) Neuberg worked on the geometry of the triangle, discovering many interesting new details but no large new theory. Pelseneer writes, "The considerable body of his work is scattered among a large number of articles for journals; in it the influence of A Möbius is clear." *SAU

1844 Georges Henri Halphen (30 October 1844, Rouen – 23 May 1889, Versailles) was a French mathematician. He did his studies at École Polytechnique (X 1862). He was known for his work in geometry, particularly in enumerative geometry and the singularity theory of algebraic curves in algebraic geometry. He also worked on invariant theory and projective differential geometry. *Wik

1863 Stanislaw Zaremba (3 Oct 1863 in Romanowka, Poland - 23 Nov 1942 in Kraków, Poland) From very unpromising times up to World War I, with the recreation of the Polish nation at the end of that war, Polish mathematics entered a golden age. Zaremba played a crucial role in this transformation. Much of Zaremba's research work was in partial differential equations and potential theory. He also made major contributions to mathematical physics and to crystallography. He made important contributions to the study of viscoelastic materials around 1905. He showed how to make tensorial definitions of stress rate that were invariant to spin and thus were suitable for use in relations between the stress history and the deformation history of a material. He studied elliptic equations and in particular contributed to the Dirichlet principle.*SAU

1906 Andrei Nikolaevich Tikhonov (30 Oct 1906 in Gzhatska, Smolensk, Russia - November 8, 1993, Moscow) Tikhonov's work led from topology to functional analysis with his famous fixed point theorem for continuous maps from convex compact subsets of locally convex topological spaces in 1935. These results are of importance in both topology and functional analysis and were applied by Tikhonov to solve problems in mathematical physics.

The extremely deep investigations of Tikhonov into a number of general problems in mathematical physics grew out of his interest in geophysics and electrodynamics. Thus, his research on the Earth's crust led to investigations on well-posed Cauchy problems for parabolic equations and to the construction of a method for solving general functional equations of Volterra type.

Tikhonov's work on mathematical physics continued throughout the 1940s and he was awarded the State Prize for this work in 1953. However, in 1948 he began to study a new type of problem when he considered the behaviour of the solutions of systems of equations with a small parameter in the term with the highest derivative. After a series of fundamental papers introducing the topic, the work was carried on by his students.

Another area in which Tikhonov made fundamental contributions was that of computational mathematics. Under his guidance many algorithms for the solution of various problems of electrodynamics, geophysics, plasma physics, gas dynamics, ... and other branches of the natural sciences were evolved and put into practice. ... One of the most outstanding achievements in computational mathematics is the theory of homogeneous difference schemes, which Tikhonov developed in collaboration with Samarskii.

In the 1960s Tikhonov began to produce an important series of papers on ill-posed problems. He defined a class of regularisable ill-posed problems and introduced the concept of a regularising operator which was used in the solution of these problems. Combining his computing skills with solving problems of this type, Tikhonov gave computer implementations of algorithms to compute the operators which he used in the solution of these problems. Tikhonov was awarded the Lenin Prize for his work on ill-posed problems in 1966. In the same year he was elected to full membership of the USSR Academy of Sciences.*SAU

1907 Harold Davenport (30 Oct 1907 in Huncoat, Lancashire, England - 9 June 1969 in Cambridge, Cambridgeshire, England) Davenport worked on number theory, in particular the geometry of numbers, Diophantine approximation and the analytic theory of numbers. He wrote a number of important textbooks and monographs including The Higher Arithmetic (1952). *SAU

1946 William Paul Thurston (October 30, 1946 – August 21, 2012) American mathematician who was awarded the Fields Medal in 1983 for his work in topology. As early as his Ph.D. thesis entitled Foliations of 3-manifolds which are circle bundles (1972) that showed the existence of compact leaves in foliations of 3-manifolds, Thurston had been working in the field of topology. In the following years, Thurston's contributions to the field of foliations were recognized to be of considerable depth, set apart by their originality. This was also true of his subsequent work on Teichmüller space. *TIS

1626 Willebrord van Royen Snell (13 June 1580 in Leiden, Netherlands - 30 Oct 1626 in Leiden, Netherlands) Snell was a Dutch mathematician who is best known for the law of refraction, a basis of modern geometric optics; but this only became known after his death when Huygens published it. His father was Rudolph Snell (1546-1613), the professor of mathematics at Leiden. Snell also improved the classical method of calculating approximate values of π by polygons, which he published in Cyclometricus (1621). Using his method, 96-sided polygons give π correct to 7 places while the classical method yields only 2 places. Van Ceulen's 35 places could be found with polygons of 2^30 sides rather than 2^62. In fact Van Ceulen's 35 places of π appear in print for the first time in this book by Snell. *SAU
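The 96-gon comparison is easy to try numerically. The sketch below rests on an assumption of mine: that the improvement meant here is the bound 3·sin x / (2 + cos x) < x, which is commonly attributed to Snell and was later proved by Huygens. The *SAU note does not spell out the method, so take this as an illustration rather than a reconstruction of Cyclometricus. The function names are also mine. The polygon is built the classical way, starting from a hexagon inscribed in a unit circle and doubling the number of sides, so π itself is only used at the end for comparison.

```python
import math


def inscribed_polygon(doublings):
    """Return (n, s): the number of sides and the side length of a regular
    polygon inscribed in a unit circle, starting from the hexagon (side 1)
    and doubling with s_2n = sqrt(2 - sqrt(4 - s_n**2))."""
    n, s = 6, 1.0
    for _ in range(doublings):
        s = math.sqrt(2.0 - math.sqrt(4.0 - s * s))
        n *= 2
    return n, s


def classical_estimate(n, s):
    """Half the inscribed perimeter, i.e. n * sin(pi/n)."""
    return n * s / 2.0


def snell_estimate(n, s):
    """n * 3 sin(x) / (2 + cos(x)) with x = pi/n (assumed form of Snell's bound)."""
    sin_x = s / 2.0
    cos_x = math.sqrt(1.0 - sin_x * sin_x)
    return n * 3.0 * sin_x / (2.0 + cos_x)


if __name__ == "__main__":
    n, s = inscribed_polygon(4)  # 6 -> 12 -> 24 -> 48 -> 96 sides
    print(n)
    print("classical:", classical_estimate(n, s))
    print("Snell:    ", snell_estimate(n, s))
    print("pi:       ", math.pi)
```

With 96 sides the classical value is off in the fourth decimal place, while the refined one falls short of π by only about two parts in a hundred million.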

1631 Michael Mästlin (30 Sept 1550 in Göppingen, Baden-Württemberg, Germany - 30 Oct 1631 in Tübingen, Baden-Württemberg, Germany) Michael Mästlin was a German astronomer who was Kepler's teacher and who publicised the Copernican system. Perhaps his greatest achievement (other than being Kepler's teacher) is that he was the first to compute the orbit of a comet, although his method was not sound. He found, however, a sun-centered orbit for the comet of 1577 which he claimed supported Copernicus's heliocentric system. He did show that the comet was further away than the moon, which contradicted the accepted teachings of Aristotle. Although clearly believing in the system as proposed by Copernicus, he taught astronomy using his own textbook, which was based on Ptolemy's system. However, for the more advanced lectures he adopted the heliocentric approach - Kepler credited Mästlin with introducing him to Copernican ideas while he was a student at Tübingen (1589-94). *SAU

1739 Leonty Filippovich Magnitsky (June 9, 1669, Ostashkov – October 30, 1739, Moscow) was a Russian mathematician and educator. From 1701 and until his death, he taught arithmetic, geometry and trigonometry at the Moscow School of Mathematics and Navigation, becoming its director in 1716. In 1703, Magnitsky wrote his famous Arithmetic (Арифметика; 2,400 copies), which was used as the principal textbook on mathematics in Russia until the middle of the 18th century. This book was more an encyclopedia of mathematics than a textbook because most of its content was communicated for the first time in Russian literature. In 1703, Magnitsky also produced a Russian edition of Adriaan Vlacq's log tables called Таблицы логарифмов и синусов, тангенсов и секансов (Tables of Logarithms, Sines, Tangents, and Secants). Legend has it that Leonty Magnitsky was nicknamed Magnitsky by Peter the Great, who considered him a "people's magnet" *Wik

1862 Ormsby MacKnight Mitchel (July 20, 1805 – October 30, 1862) American astronomer and major general in the American Civil War.

A multi-talented man, he was also an attorney, surveyor, and publisher. He is notable for publishing the first magazine in the United States devoted to astronomy. Known in the Union Army as "Old Stars", he is best known for ordering the raid that became famous as the Great Locomotive Chase during the Civil War. He was a classmate of Robert E. Lee and Joseph E. Johnston at West Point where he stayed as assistant professor of mathematics for three years after graduation.

The U.S. communities of Mitchell, Indiana, Mitchelville, South Carolina, and Fort Mitchell, Kentucky were named for him. A persistently bright region near the Mars south pole that was first observed by Mitchel in 1846 is also named in his honor. *TIA